Using Cloud-based Load Balancing To Horizontally Scale Effectively

In a typical web application setup, one or more websites are hosted on a single LAMP (Linux, Apache, MySQL, PHP) computer. This computer is provided either by a cloud-based host as a node or slice, or by a shared hosting provider as a packaged offering.

For most scenarios this is perfectly fine, and it will run most low-to-medium traffic blogging, corporate and e-commerce web applications with little bother. The problem appears when low-to-moderate traffic grows into sustained high traffic, or arrives in bursts of high traffic.

In the age of social media, platforms such as Reddit, Twitter and Facebook can lead to a web link being shared across the world in seconds. The snowballing effect can really test the limits of not only the software code and web server configuration, but also the underlying network hardware, processing power and available memory.

Once the demands of the running software exceed the capability of the hardware provided, the web link is no longer reachable. On Reddit the phrase ‘Hug of Death’ is used to describe this exact scenario. Links shared to the popular pages of Reddit are seen by millions of people, and when all of these people attempt to follow the link within a short period of time, the hosting server can become overloaded.

When a website is stored on a single computer, the only option may appear to be to increase the underlying hardware capability to satisfy demand. Using Rackspace as an example, it is possible to achieve this (increasing RAM, processing power, etc.), but this requires rebuilding the server and leads to complete downtime while the server is rebooted.

Horizontal scaling is the answer to this problem. It involves adding additional computers alongside the main server as and when required. Conversely, additional computers can be removed when they are no longer required.
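To make the add-and-remove idea concrete, here is a minimal sketch of a scaling decision. The per-node capacity figure and the function names are assumptions made for illustration; real capacity depends entirely on the application and should be measured.

```python
import math


def nodes_required(concurrent_requests, per_node_capacity, minimum=1):
    """Return how many web nodes are needed for the given load.

    per_node_capacity is an assumed figure: the number of concurrent
    requests one node can comfortably serve.
    """
    if per_node_capacity <= 0:
        raise ValueError("per_node_capacity must be positive")
    needed = math.ceil(concurrent_requests / per_node_capacity)
    # Never drop below the minimum, so the site always has a server.
    return max(minimum, needed)


def scaling_action(current_nodes, concurrent_requests, per_node_capacity):
    """Positive result: nodes to add. Negative result: nodes to remove."""
    target = nodes_required(concurrent_requests, per_node_capacity)
    return target - current_nodes
```

For example, with an assumed capacity of 500 concurrent requests per node, a burst of 1,200 requests on a single node gives `scaling_action(1, 1200, 500) == 2`, meaning two more nodes should be added.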

To achieve this, the following terminology should be understood:

-

Cloud Database

On a single computer/node/slice setup, installing MySQL or an equivalent would fulfill the database requirement of the application. However, in order to scale efficiently, the database needs to be separate from the server applications. We will use the Rackspace Cloud Database service, which provides a scalable, managed MySQL database for a low hourly fee.

-

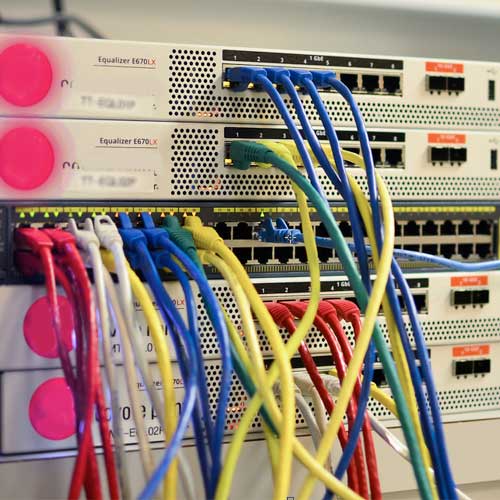

Load Balancer

A load balancer sits as the main point of contact between an external content request and the servers in the background that actually fulfill the request. Rackspace provides a load balancer with a dedicated IP, which is used as the IP address in the domain's A records. Traffic between external servers and the load balancer is billed, but internal traffic is free of charge. A single load balancer can support 20,000 sustained concurrent connections and can cope with large spikes of up to 100,000.
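The following is a toy sketch of what a load balancer does with its pool of nodes. It assumes a simple round-robin policy and uses made-up internal IP addresses; it is not a description of how the Rackspace load balancer is implemented, only an illustration of handing requests to each node in turn and of adding or removing nodes.

```python
class RoundRobinPool:
    """Toy pool of backend nodes, served in round-robin order."""

    def __init__(self, nodes):
        self._nodes = list(nodes)
        self._next = 0  # index of the node that receives the next request

    def add(self, node):
        # Adding a node that is already present is a no-op.
        if node not in self._nodes:
            self._nodes.append(node)

    def remove(self, node):
        self._nodes.remove(node)
        # Restart the rotation so the index is always valid.
        self._next = 0

    def pick(self):
        if not self._nodes:
            raise RuntimeError("no nodes available behind the load balancer")
        node = self._nodes[self._next % len(self._nodes)]
        self._next = (self._next + 1) % len(self._nodes)
        return node
```

With two nodes in the pool, consecutive calls to `pick()` alternate between them. After `add()` is called with a third node, it joins the rotation without the first two being interrupted.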

-

Master Server

A single computer/node/slice that is the main upload and configuration point. All changes made to it are propagated to any slave servers.

-

Slave Server

One or more slave servers are kept up to date by the master server, so they remain in sync with any update pushed. At times of increased load, additional slave servers are created from backups, the master server is updated with the internal IPs of the new slave servers, and everything is synchronised. The slave servers are also added to the pool of servers behind the load balancer.

-

SSH Keys

In order to allow the master server to automatically connect to and maintain the slave servers, an SSH key pair is created on the master server and its public key is copied to the slave server(s).
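As a rough sketch, the key setup comes down to two standard OpenSSH commands: `ssh-keygen` to create the key pair and `ssh-copy-id` to install the public key on each slave. The helper below only builds the argument lists; the key path, the `root` user and the IP addresses are placeholders chosen for illustration.

```python
def ssh_key_commands(key_path, slave_hosts, user="root"):
    """Build the commands to create a key pair and copy it to each slave.

    Returns a list of argument lists. Nothing is executed here.
    """
    commands = [
        # 4096-bit RSA key with an empty passphrase, so that the master
        # can connect to the slaves unattended.
        ["ssh-keygen", "-t", "rsa", "-b", "4096", "-N", "", "-f", key_path],
    ]
    for host in slave_hosts:
        # ssh-copy-id appends the public key to the slave's authorized_keys.
        commands.append(["ssh-copy-id", "-i", key_path + ".pub", f"{user}@{host}"])
    return commands
```

Each argument list could then be run on the master server with `subprocess.run(cmd, check=True)`. Expect `ssh-copy-id` to ask for the slave's password the first time, since the key is not yet installed.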

-

Replication (Lsync)

Lsync is used to do the heavy lifting of checking for updated files and copying across changes between the master and slave servers.
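To illustrate the idea of "check for updated files and copy the changes", here is a simplified single-pass sync between two local directories. It is only a sketch of the concept: it does not watch for changes continuously or copy over the network as the real tool does, and the function name is made up.

```python
import filecmp
import shutil
from pathlib import Path


def sync_once(source, target):
    """Copy new or changed files from source to target.

    Returns the relative paths that were copied, in sorted order.
    Files deleted from source are NOT removed from target in this sketch.
    """
    source, target = Path(source), Path(target)
    copied = []
    for src in sorted(source.rglob("*")):
        if not src.is_file():
            continue
        rel = src.relative_to(source)
        dst = target / rel
        # Skip files whose contents are already identical on the target.
        if dst.exists() and filecmp.cmp(src, dst, shallow=False):
            continue
        dst.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src, dst)  # copy2 also preserves timestamps
        copied.append(rel.as_posix())
    return copied
```

Running `sync_once` a second time with no changes on the master side copies nothing, which is the behaviour we want from a replication step: only differences travel between nodes.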

-

Reverse Proxy Server (Varnish)

Varnish is an application used as the main port of call on the slave servers. It responds to incoming requests from the load balancer and decides (based upon rules set) whether to reply directly or relay the request back to the master server. It is useful when there are certain parts of the web application that must update files and therefore should be run by the master server.
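The decision described above can be sketched as a small routing rule. In practice this logic would be written in Varnish's own configuration language; the Python below is only an illustration, and the path prefixes are hypothetical examples of "parts of the application that must update files".

```python
# Hypothetical paths that write files and so must run on the master.
MASTER_ONLY_PREFIXES = ("/admin/", "/upload/")

# Methods that only read data and are safe to answer on a slave.
SAFE_METHODS = {"GET", "HEAD"}


def choose_backend(method, path):
    """Return "master" if the request must be relayed, else "local"."""
    if method.upper() not in SAFE_METHODS:
        # Anything that may change state goes to the master server.
        return "master"
    if path.startswith(MASTER_ONLY_PREFIXES):
        return "master"
    return "local"
```

With these assumed rules, a `GET` for a blog post is answered by the slave itself, while a `POST` or any request under `/admin/` is relayed to the master.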

-

Image Backups

Rackspace allows the creation of full node images (we’ll use the term node from now on instead of computer). These images can be created at any stage and then used to redeploy a live instance of the configuration within the image. We will use image backups to create duplicate slave servers quickly, relying upon Lsync to keep them updated.

-

Content Distribution Network (CDN)

A final thing to note is the use of a CDN for uploaded static files, which may be accessed frequently. Rather than serve these from the same nodes responsible for processing application data, it is beneficial to hand off the responsibility to a CDN. This requires knowing how to upload files directly to a CDN, either manually or programmatically from within the web application.
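Once static files live on a CDN, the application also needs to link to them there. A minimal sketch of that step follows; the CDN hostname and the list of extensions are assumptions for illustration, and the actual upload to the CDN is not shown.

```python
# Hypothetical CDN hostname; replace with the one your provider assigns.
CDN_BASE = "https://cdn.example.com"

# File types treated as static in this sketch.
STATIC_EXTENSIONS = (".css", ".js", ".png", ".jpg", ".gif")


def asset_url(path, cdn_base=CDN_BASE):
    """Return the CDN URL for static files, or the path unchanged."""
    if path.lower().endswith(STATIC_EXTENSIONS):
        return cdn_base.rstrip("/") + "/" + path.lstrip("/")
    # Dynamic pages are still served by the application nodes.
    return path
```

Templates would then call `asset_url("/uploads/logo.png")` instead of hard-coding the path, so static requests never reach the application nodes.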

-

Optional: Memcached (Fast Key-Value Based Storage)

An additional option for helping with high traffic scenarios is to utilise caching where possible to minimise database hits. We will go through installing a shared node for Memcached.
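The usual way to minimise database hits is the "check the cache first, fall back to the database" pattern, sketched below. To keep the example runnable without a Memcached server, it uses a small in-memory stand-in; the class and function names are made up, and a real Memcached client offering similar `get` and `set` operations would take its place.

```python
class DictCache:
    """In-memory stand-in for a cache client with get/set operations."""

    def __init__(self):
        self._data = {}

    def get(self, key):
        # Returns None on a miss, as cache clients commonly do.
        return self._data.get(key)

    def set(self, key, value):
        self._data[key] = value


def cached_query(cache, key, run_query):
    """Return the cached value for key, running the query only on a miss."""
    value = cache.get(key)
    if value is None:
        value = run_query()  # the expensive database hit
        cache.set(key, value)
    return value
```

The first call for a given key runs the query and stores the result. Later calls with the same key are answered from the cache, so the database is not touched. A real setup would also give entries an expiry time so that stale results are eventually refreshed.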

So, let’s get started. Read through the following collection of step-by-step guides.

- Converting a single node MySQL application to a Cloud Databases instance.

- Copying data from existing MySQL databases to a Cloud Databases instance.

- Optionally creating a shared Memcached instance in the cloud to cache popular database queries and more.

By this stage, we have created a cloud-based database instance and copied the existing databases across. Any application code should also be updated at this point to use the cloud databases, which avoids the need for a future synchronisation of data.
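One way to make this switch painless is to keep the database location out of the code and read it from the environment, so that every node can be pointed at the cloud database without editing the application. The sketch below assumes hypothetical variable names (`DB_HOST`, `DB_PORT`, `DB_NAME`, `DB_USER`) and falls back to a local MySQL on its default port.

```python
import os


def database_settings(env=None):
    """Read database connection settings, defaulting to a local MySQL."""
    env = os.environ if env is None else env
    return {
        # On a single-node setup the database lives on localhost; after
        # the move this becomes the cloud database hostname.
        "host": env.get("DB_HOST", "localhost"),
        "port": int(env.get("DB_PORT", "3306")),  # 3306 is MySQL's default
        "name": env.get("DB_NAME", "app"),
        "user": env.get("DB_USER", "app"),
    }
```

Because the master and every slave read the same settings, new slave nodes created from an image connect to the shared database straight away.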

We have also optionally created a shared instance hosting the Memcached application. Again, any application code using the caches should be updated to point to the new shared IP.

The next stage is to create the actual server setup. This includes the following:

- Create a Master Server.

- Create an initial Slave/Clone Server.

- Create a load balancer to place in front of the servers.

Read the full step-by-step guide to creating servers for load balancing.
